A single pixel can now carry the weight of a multimillion-dollar settlement as the rapid proliferation of generative artificial intelligence rewrites the rules of insurance claims processing across the global market. While traditional scams once required elaborate physical setups or professional forgery, modern policyholders can manipulate reality with a few taps on a smartphone screen. A comprehensive study by Verisk highlights a troubling trend in which AI-powered editing tools are driving a measurable surge in insurance fraud, effectively outmaneuvering legacy detection protocols. This shift is not merely a technological fluke but a symptom of a deeper cultural change in how evidence is perceived and presented. The data reveals a significant generational divide, with younger cohorts such as Generation Z and Millennials showing a much higher propensity to justify digital alterations as a means to “strengthen” their claims. For many, the distinction between aesthetic enhancement and criminal falsification has become dangerously thin, creating a massive ethical gray area for insurers.

The Proliferation: Accessibility and Consumer Complicity

The democratization of high-end image and video manipulation software has removed the technical barriers that once restricted sophisticated fraud to professional crime syndicates or skilled graphic designers. In 2026, consumer-grade smartphone applications offer features that can seamlessly remove objects, alter timestamps, or exaggerate structural damage with photorealistic precision. This widespread availability has fundamentally changed the risk profile of the average policyholder, as 44% of consumers who use these tools now describe the results as indistinguishable from reality. Because these applications are marketed for creative expression and social media presence, many users fail to recognize the legal consequences of applying the same logic to a formal insurance submission. The normalization of digital “touch-ups” in everyday life has created a psychological buffer, where inflating the severity of a fender-bender or a water leak feels like a minor optimization rather than a fraudulent act.

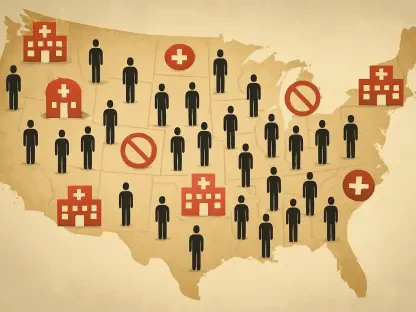

Beyond individual actions, the collective shift toward digital-first claims processing has inadvertently provided a fertile environment for these AI-driven tactics to flourish without immediate scrutiny. Insurance professionals have noted a staggering increase in the volume of manipulated media, with 98% of surveyed claims adjusters identifying AI editing as a primary catalyst for the current surge in fraudulent activity. As these tools become more intuitive, the speed at which fabricated evidence can be produced allows fraudsters to overwhelm claims departments through high-volume submissions. The sophistication of these edits has reached a point where 76% of insurers report that fraudulent submissions are becoming significantly harder to detect through traditional visual inspections. This evolution suggests that the industry is no longer just fighting dishonest individuals but is instead engaged in a structural battle against a technology that is designed to deceive the human eye with increasing efficiency.

The Detection Crisis: Navigating the Confidence Gap

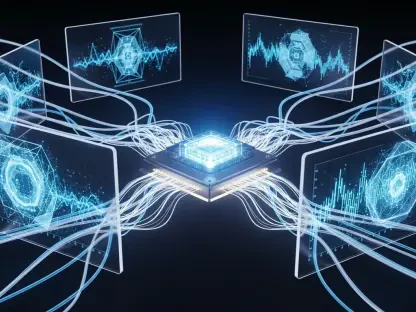

As fraudulent techniques enter a new phase of refinement, the insurance industry finds itself struggling to maintain a technological equilibrium between defense and deception. While many companies have invested heavily in third-party AI detection systems and internal forensic tools, a profound confidence gap remains a significant vulnerability in the modern claims workflow. Data indicates that while over half of insurance professionals feel capable of spotting rudimentary photo edits, only 43% express confidence in their ability to verify the authenticity of media at the scale required for current claim volumes. This uncertainty is exacerbated by the rise of deepfakes, which involve entirely synthetic video or audio recordings that can mimic policyholders or witnesses with terrifying accuracy. Only 32% of professionals feel truly equipped to identify these synthetic creations, suggesting that a vast portion of digital media fraud likely bypasses existing security hurdles without ever being flagged for review.

This lack of certainty among frontline adjusters creates a ripple effect that compromises the integrity of the entire insurance ecosystem by allowing fraudulent payouts to deplete reserve funds. The industry now faces a reality in which detection frameworks are failing to keep pace with the rapid innovation cycles of generative models that prioritize realism over factual accuracy. Because many of these detection tools are reactive, they often lag behind the latest iterations of editing software that can bypass standard metadata analysis or compression-based forgery detection. Consequently, a consensus has emerged among industry experts that a substantial percentage of fraudulent activity remains undetected, hidden in the noise of legitimate digital documentation. To address this, insurers are being forced to rethink their reliance on isolated verification steps, recognizing that a more holistic and integrated approach to data integrity is necessary to protect the financial stability of the sector in an era of digital uncertainty.

Strategic Resilience: Building a Verifiable Future

The long-term ramifications of AI-fueled manipulation extend far beyond the immediate balance sheets of insurance providers, ultimately impacting the premiums paid by honest policyholders across the country. Survey data suggest that approximately 69% of consumers anticipate a rise in insurance costs as a direct result of these fraudulent activities, creating a sense of urgency for industry-wide reform. To mitigate these risks, 45% of insurers are already moving toward much stricter documentation requirements that demand multi-angle verification and live-capture metadata. This transition often necessitates a shift in how claims teams operate, requiring them to adopt roles that resemble digital forensic investigators rather than traditional administrative adjusters. While these measures are essential for security, they also present a challenge in maintaining customer satisfaction, as the additional layers of scrutiny can lead to extended cycle times and more complex interactions for those filing legitimate claims.

Navigating this complex landscape requires a departure from siloed operations toward a more collaborative and technologically integrated ecosystem that prioritizes shared intelligence and transparency. Industry leaders emphasize that the only way to combat the scale of AI-driven deception is through the adoption of decentralized verification protocols and the standardization of digital evidence tracking. By fostering an environment where data can be cross-referenced across multiple platforms, insurers can identify patterns of fraud that are invisible to individual detection systems. The focus shifts from merely identifying fake images to establishing a verifiable chain of custody for every piece of documentation submitted. This proactive strategy can not only deter potential fraudsters but also restore a level of trust and efficiency to the claims process. Ultimately, the successful integration of advanced forensic tools would allow the industry to protect its resources while ensuring that legitimate policyholders receive the support they need.