Simon Glairy is a distinguished leader in the insurance sector, currently specializing in the intersection of risk management and advanced AI-driven assessments. With a career rooted in navigating the complexities of large-scale organizational shifts, he has become a leading voice on how legacy institutions can reinvent themselves through technology. His insights offer a masterclass in balancing the rigid requirements of traditional insurance with the agile demands of modern Insurtech, particularly in how data can be transformed from a stagnant asset into a primary driver of commercial growth.

The following discussion explores the strategic overhaul of operating models, the transition from fragmented legacy systems to unified platforms, and the deliberate deployment of artificial intelligence to boost producer productivity.

Managing the transition from hundreds of legacy systems to a single platform often reveals hidden operational complexities. What friction points do you prioritize during this consolidation, and how do you maintain a consistent client experience? Please describe the step-by-step process used to ensure data remains reliable during such a shift.

The primary friction points we address are the “sprawl” of redundant applications—which in large firms can reach upwards of 600 systems—and the inconsistent workflows inherited from years of acquisitions. When you have dozens of different offices using different tools, the client experience becomes fragmented, so we prioritize the rollout of a “single pane of glass” like the Applied Epic platform to create a unified interface. Our process for data reliability starts with a massive rationalization phase where we eliminate duplication and streamline vendor relationships to ensure we aren’t migrating “dirty” or redundant data. Next, we move into a rigorous validation stage where legacy data is mapped to the new architecture, followed by a pilot phase where small user groups test the system in real-world scenarios. Finally, we establish a continuous feedback loop where daily refinements are made based on producer input, ensuring that by the time we scale, the data is not only reliable but actually enhances the speed of service for our clients.
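The rationalization and validation steps described above can be illustrated with a short Python sketch. It is not how the firm or Applied Epic actually performs a migration: the legacy field names, the `FIELD_MAP` table, the required-field list, and the choice to treat a normalized e-mail address as the duplicate key are all hypothetical assumptions made for illustration.

```python
# Minimal sketch of legacy-data rationalization before a migration.
# FIELD_MAP, REQUIRED and the e-mail dedup key are hypothetical assumptions.

# Different legacy systems name the same field differently.
FIELD_MAP = {
    "acct_no": "client_id",
    "client_no": "client_id",
    "cust_name": "name",
    "client_name": "name",
    "email_addr": "email",
    "mail": "email",
}
REQUIRED = ("client_id", "name", "email")


def map_record(legacy: dict) -> dict:
    """Rename legacy keys to target-schema keys; unmapped fields are dropped."""
    return {FIELD_MAP[k]: v for k, v in legacy.items() if k in FIELD_MAP}


def normalize(record: dict) -> dict:
    """Trim whitespace and lowercase the e-mail so duplicates compare equal."""
    out = {k: str(v).strip() for k, v in record.items()}
    if "email" in out:
        out["email"] = out["email"].lower()
    return out


def rationalize(legacy_records: list) -> tuple:
    """Map, validate and de-duplicate records; return (clean, rejected)."""
    clean, rejected, seen = [], [], set()
    for raw in legacy_records:
        rec = normalize(map_record(raw))
        missing = [f for f in REQUIRED if not rec.get(f)]
        if missing:
            # Quarantine incomplete records instead of migrating "dirty" data.
            rejected.append((raw, missing))
            continue
        if rec["email"] in seen:
            continue  # same client already imported from another system
        seen.add(rec["email"])
        clean.append(rec)
    return clean, rejected


legacy = [
    {"acct_no": "A1", "cust_name": "Acme Ltd ", "email_addr": "Ops@Acme.com"},
    {"client_no": "B7", "client_name": "Acme Ltd", "mail": "ops@acme.com"},
    {"acct_no": "C3", "cust_name": "Beta LLC"},
]
clean, rejected = rationalize(legacy)
print(clean)     # one Acme record; the second system's copy is dropped
print(rejected)  # Beta LLC, flagged for its missing e-mail
```

Keeping the first record seen is the simplest possible merge rule; a real migration would more likely reconcile conflicting fields across systems, which this sketch does not attempt.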

Shifting from external outsourcing to internal engineering teams allows for greater control over innovation. How do you align a specialized engineering team with specific revenue-growth goals, and what anecdotes can you share about building proprietary tools that influence sales velocity? Please elaborate with specific metrics used to track progress.

Aligning a specialized engineering team, like the 20-plus experts we’ve integrated into our innovation centers, requires moving away from general IT support toward revenue-focused product development. We achieve this alignment by embedding these engineers into the business units so they can build proprietary tools that directly address the bottlenecks our 2,800 producers face every day. For instance, by developing internal tools that automate data ingestion, we’ve seen a significant reduction in manual entry, which allows producers to spend more time on client-facing activities. We track our success through very specific metrics, primarily focusing on sales velocity and the amount of new business generated per producer. Seeing a measurable uptick in these figures after a tool’s release proves that our internal engineering isn’t just a cost center, but a genuine engine for organic growth and market share expansion.
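The interview names sales velocity and new business per producer as the key metrics but does not say how they are calculated. The sketch below assumes one widely used sales-operations definition of sales velocity (opportunities × average deal value × win rate ÷ sales-cycle length in days); the function names and every sample figure are hypothetical and are not the firm's data.

```python
# Hypothetical before/after comparison for the two metrics named in the interview.
# The sales-velocity formula is a common industry definition, assumed here.


def sales_velocity(opportunities: int, avg_deal_value: float,
                   win_rate: float, cycle_days: float) -> float:
    """Expected revenue moving through the pipeline per day."""
    if cycle_days <= 0:
        raise ValueError("cycle_days must be positive")
    return opportunities * avg_deal_value * win_rate / cycle_days


def new_business_per_producer(new_business: float, producers: int) -> float:
    """Average new-business revenue written by each producer."""
    if producers <= 0:
        raise ValueError("producers must be positive")
    return new_business / producers


def pct_change(before: float, after: float) -> float:
    """Relative change in percent, used to compare pre- and post-release figures."""
    return (after - before) / before * 100


# Illustrative numbers only: a tool that shortens the sales cycle from 50 to 40 days.
before = sales_velocity(opportunities=100, avg_deal_value=5_000, win_rate=0.25, cycle_days=50)
after = sales_velocity(opportunities=100, avg_deal_value=5_000, win_rate=0.25, cycle_days=40)
print(before, after, pct_change(before, after))  # 2500.0 3125.0 25.0
```

Holding the other inputs fixed isolates the effect of a shorter cycle; in practice a tool release may shift several inputs at once, so attributing an uptick to a single tool would need more care.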

High-impact AI applications, such as coverage-gap analysis and contract reviews, are replacing manual tasks. How do you identify which manual processes are ripe for automation, and what is the iterative process for refining these tools? Please explain the feedback loops you use to ensure these tools provide practical value.

We identify processes for automation by looking for tasks that are traditionally time-consuming yet high-value, such as quote comparisons or contract reviews, where human error can lead to significant missed opportunities. Instead of broad experimentation, we use a targeted approach where we scan the market for digital-native benchmarks and then build solutions that fit precisely into our existing workflows. Our iterative process is “pilot-led,” meaning we trial these AI tools with a small group of users who provide daily feedback on the tool’s accuracy and ease of use. This feedback loop is essential because it allows us to refine the algorithms and user interfaces in real time before a full-scale deployment. By measuring pre- and post-adoption productivity, we ensure that every AI application we launch provides a practical, quantifiable advantage to our teams in the field.
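The pilot-led loop can be sketched as a simple gate: aggregate the pilot group's daily ratings, compare average task time before and after adoption, and scale only when both clear a threshold. The 1-to-5 rating scale, the `PilotFeedback` record, and the threshold values are hypothetical assumptions, not the firm's actual criteria.

```python
from dataclasses import dataclass
from statistics import mean

# Hypothetical pilot gate; the rating scale and thresholds are assumptions.


@dataclass
class PilotFeedback:
    """One pilot user's daily rating on an assumed 1-5 scale."""
    user: str
    accuracy: int
    ease_of_use: int


def productivity_gain(minutes_before: list, minutes_after: list) -> float:
    """Percent reduction in average minutes per task after adoption."""
    before, after = mean(minutes_before), mean(minutes_after)
    return (before - after) / before * 100


def ready_to_scale(feedback: list, minutes_before: list, minutes_after: list,
                   min_rating: float = 4.0, min_gain: float = 20.0) -> bool:
    """Scale only if ratings and the measured productivity gain both clear their bars."""
    if not feedback:
        return False
    accuracy = mean(f.accuracy for f in feedback)
    ease = mean(f.ease_of_use for f in feedback)
    gain = productivity_gain(minutes_before, minutes_after)
    return accuracy >= min_rating and ease >= min_rating and gain >= min_gain


day_one = [
    PilotFeedback("u1", accuracy=5, ease_of_use=4),
    PilotFeedback("u2", accuracy=4, ease_of_use=4),
    PilotFeedback("u3", accuracy=4, ease_of_use=5),
]
# Illustrative minutes per contract review, sampled before and during the pilot.
print(productivity_gain([30, 34], [20, 24]))        # 31.25
print(ready_to_scale(day_one, [30, 34], [20, 24]))  # True
```

Requiring both a user-rating bar and a measured time saving mirrors the two signals the answer describes: daily qualitative feedback and pre/post productivity.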

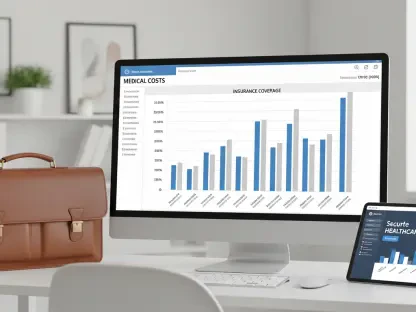

Transitioning to a unified data environment requires bridging the gap between legacy infrastructure and modern platforms. How do you leverage senior leadership to manage this technical debt, and what impact does a “single pane of glass” approach have on commercial advantages? Please provide examples of how this affects client engagement.

Managing technical debt is a leadership challenge as much as a technical one, which is why we recruit veterans from top-tier firms like AIG and Oliver Wyman to oversee the transformation. These leaders provide the strategic oversight needed to bridge the gap between old infrastructure and a unified data environment, ensuring that the “single pane of glass” isn’t just a buzzword but a functional reality. This unified approach gives us a massive commercial advantage because it gives us a 360-degree view of the client’s needs, enabling more accurate coverage-gap analysis and cross-selling opportunities. For the client, this translates to a much more proactive relationship; instead of us asking them for the same information multiple times, we show up with data-driven recommendations already in hand. This level of sophistication changes the client’s perception of the agency from a mere service provider to a high-value strategic partner.
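As a rough illustration of why a unified view enables coverage-gap analysis, the sketch below merges each client's policy lines from several system exports and compares them with a recommended set for the client's segment. The segment names, the `RECOMMENDED` table, and the export format are hypothetical; production coverage-gap tools, including AI-driven ones, would likely weigh far more than a set difference.

```python
from collections import defaultdict

# Hypothetical recommended policy lines per client segment (illustrative only).
RECOMMENDED = {
    "construction": {"general_liability", "workers_comp", "commercial_auto", "umbrella"},
    "tech": {"general_liability", "cyber", "professional_liability"},
}


def unified_view(*system_exports):
    """Merge (client_id, policy_line) rows from several systems into one set per client."""
    view = defaultdict(set)
    for export in system_exports:
        for client_id, policy_line in export:
            view[client_id].add(policy_line)
    return dict(view)


def coverage_gaps(view, segments):
    """List the recommended lines each client does not yet hold."""
    return {
        client_id: sorted(RECOMMENDED.get(segments.get(client_id, ""), set()) - held)
        for client_id, held in view.items()
    }


crm_export = [("C1", "general_liability")]
billing_export = [("C1", "workers_comp"), ("C2", "cyber")]
view = unified_view(crm_export, billing_export)
print(coverage_gaps(view, {"C1": "construction", "C2": "tech"}))
# {'C1': ['commercial_auto', 'umbrella'], 'C2': ['general_liability', 'professional_liability']}
```

Neither export alone shows that client C1 already holds both general liability and workers' comp; only the merged view does, which is the practical point of a "single pane of glass."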

A “build and buy” strategy involves balancing internal development with external insurtech partnerships. How do you determine which capabilities should be kept in-house versus sourced externally, and what criteria ensure these tools integrate smoothly? Please share the trade-offs you consider when making these investment decisions.

Our “build and buy” strategy is based on the principle that no single company should try to do everything alone; we keep core, revenue-driving innovations in-house while partnering with Insurtechs for specialized, niche capabilities. We decide to “build” when a tool is central to our unique producer workflow and “buy” when there is a best-in-class market solution that can be integrated via API into our primary platform. The main trade-off we consider is the speed of implementation versus the degree of customization; buying is often faster, but building gives us a proprietary edge that competitors can’t easily replicate. To ensure smooth integration, we apply a strict set of architectural standards that any external tool must meet before it touches our data environment. This balanced approach allows us to stay at the cutting edge of the industry without over-extending our internal resources on solved problems.
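The build-or-buy rule described above can be expressed as a small decision function: build what is core to the producer workflow, buy a market solution otherwise, and admit an external tool only if it meets the architectural standards. The `Capability` fields and the contents of `REQUIRED_STANDARDS` are hypothetical placeholders; the interview does not list the firm's actual standards.

```python
from dataclasses import dataclass, field

# Hypothetical architectural standards an external tool must meet before it
# touches the data environment (placeholder list, not the firm's).
REQUIRED_STANDARDS = {"rest_api", "sso", "encryption_at_rest", "audit_logging"}


@dataclass
class Capability:
    name: str
    core_to_producer_workflow: bool
    market_solution_available: bool
    vendor_standards: set = field(default_factory=set)


def sourcing_decision(cap: Capability) -> str:
    """Return 'build', 'buy', or a rejection naming the unmet standards."""
    if cap.core_to_producer_workflow:
        return "build"  # proprietary edge outweighs slower delivery
    if not cap.market_solution_available:
        return "build"  # nothing suitable to buy yet
    missing = REQUIRED_STANDARDS - cap.vendor_standards
    if missing:
        return "reject vendor: missing " + ", ".join(sorted(missing))
    return "buy"  # faster to integrate a solved problem via API


print(sourcing_decision(Capability("producer workflow tool", True, True)))
print(sourcing_decision(Capability("e-signature", False, True, {"rest_api", "sso"})))
# build
# reject vendor: missing audit_logging, encryption_at_rest
```

Checking standards only on the "buy" path reflects the answer's framing that the architectural bar applies to external tools before they touch the data environment.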

What is your forecast for insurance agency digital transformation?

I believe we are entering a phase where the “entrepreneurial growth” model of the past, characterized by fragmented acquisitions, will be completely replaced by “integrated scale.” In the next few years, the most successful agencies will be those that have successfully consolidated their data into a single, actionable asset that can power predictive AI at every level of the business. We will see a shift where the “producer” role evolves into a “data-empowered advisor,” supported by automated ingestion and real-time risk insights that remove nearly all administrative friction. Ultimately, the industry will move away from being a “paper-and-process” business to one defined by technology-driven productivity, where the speed of data dictates the winner of the market. Agencies that fail to unify their platforms now will find themselves unable to compete with the sheer efficiency and insight of those that have built a modern digital foundation.