The catastrophic scale of modern climate events and the digital volatility of the 21st-century economy have rendered traditional, siloed insurance workflows effectively obsolete. As multinational corporations navigate a labyrinth of regional regulations and escalating loss severities, the emergence of the Global Claims Management System (GCMS) represents more than an incremental software update. It is a fundamental architectural shift that attempts to reconcile the vast scale of international commerce with the granular, often messy reality of local physical damage. This review examines how these systems are moving beyond basic database management to become intelligent, predictive ecosystems capable of stabilizing the global risk landscape.

The Foundation of Modern Claims Infrastructure

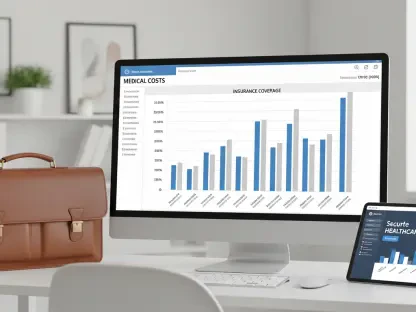

The core principle behind a contemporary claims management system is the centralization of disparate data streams into a single, actionable truth. Historically, the insurance industry operated on fragmented platforms that struggled to communicate across borders, leading to massive inefficiencies and “leakage”—financial losses resulting from poor decision-making or administrative errors. The modern infrastructure solves this by utilizing cloud-native environments that integrate real-time telemetry, legal documentation, and financial reporting. By providing a unified interface, these systems allow stakeholders to visualize a claim’s lifecycle from the moment of first notice of loss to the final settlement.

What makes this implementation unique is its ability to serve as a bridge between the physical and digital worlds. Instead of waiting for a manual paper trail, the system ingests data from IoT devices, satellite imagery, and localized weather reports to validate losses almost instantly. This context is critical because it moves the technology from a passive ledger to an active participant in risk mitigation. In the broader technological landscape, this represents the transition from “Systems of Record” to “Systems of Intelligence,” where the value lies not just in storing data, but in interpreting it to predict the next bottleneck in the supply chain or the next surge in liability.

Core Architectural Features and Functional Components

Integrated Operational Frameworks

At the heart of the most successful systems lies an integrated operational framework that effectively dissolves the traditional barriers between regional offices. This is not merely a shared server; it is a sophisticated logic engine that applies consistent global standards while allowing for local flexibility. For example, a claim filed in Singapore might trigger different regulatory compliance checks than one filed in Germany, yet the overarching financial reporting remains uniform for the headquarters. This dual-layer logic ensures that multinational firms do not lose the forest for the trees, maintaining high-level visibility without sacrificing the precision required for local legal nuances.
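The dual-layer idea can be illustrated with a minimal Python sketch. Everything named here (the `Claim` fields, the check names, the `LOCAL_CHECKS` table) is a hypothetical placeholder rather than any vendor's actual rule set; the point is only that the local layer varies by jurisdiction while the global reporting shape does not.

```python
from dataclasses import dataclass

# Hypothetical per-jurisdiction checks; real rule sets would come from
# local legal and compliance teams, not a hard-coded table.
LOCAL_CHECKS = {
    "SG": ["local_privacy_review", "regulator_notification"],
    "DE": ["local_privacy_review", "works_council_notice"],
}


@dataclass
class Claim:
    claim_id: str
    jurisdiction: str
    amount_local: float
    currency: str


def local_compliance_checks(claim):
    """Local layer: the list of checks depends on where the claim was filed."""
    return LOCAL_CHECKS.get(claim.jurisdiction, ["manual_compliance_review"])


def global_report_row(claim):
    """Global layer: every claim yields the same fields for headquarters."""
    return {
        "claim_id": claim.claim_id,
        "jurisdiction": claim.jurisdiction,
        "amount_local": claim.amount_local,
        "currency": claim.currency,
    }


sg_claim = Claim("C-1", "SG", 1000.0, "SGD")
de_claim = Claim("C-2", "DE", 2000.0, "EUR")
```

In this sketch, a jurisdiction with no configured rules falls back to a manual review rather than silently passing, which seems the safer default for a compliance step.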

The performance of these frameworks is measured by their ability to reduce the “cycle time”—the duration from loss to resolution. By automating the routing of claims based on complexity and adjuster expertise, the system ensures that high-value technical losses are flagged for human experts immediately, while routine claims are processed through automated straight-through processing. This prioritization is vital in a market where the speed of capital injection can determine whether a business survives a catastrophe or collapses under the weight of its own halted operations.
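A rough sketch of that routing decision follows. The thresholds, the 1-to-5 complexity scale, and the adjuster roster are all assumptions made for illustration; a production system would likely derive them from underwriting guidelines and historical data.

```python
from dataclasses import dataclass

# Assumed limits for straight-through processing (illustrative values only).
STP_AMOUNT_LIMIT = 5_000.0
STP_COMPLEXITY_LIMIT = 2


@dataclass
class Claim:
    claim_id: str
    amount: float
    complexity: int  # assumed scale: 1 (routine) to 5 (highly technical)
    specialty: str


def route(claim, adjusters):
    """Return 'straight_through' or the name of an adjuster with a matching specialty."""
    if claim.amount <= STP_AMOUNT_LIMIT and claim.complexity <= STP_COMPLEXITY_LIMIT:
        return "straight_through"
    for name, specialties in adjusters.items():
        if claim.specialty in specialties:
            return name
    # No expert available: park the claim for manual assignment.
    return "unassigned_review_queue"


# Hypothetical adjuster roster mapping names to areas of expertise.
adjusters = {"adjuster_a": {"marine"}, "adjuster_b": {"cyber", "property"}}

routine = Claim("C-1", 1_200.0, 1, "property")
technical = Claim("C-2", 900_000.0, 5, "marine")
orphan = Claim("C-3", 50_000.0, 4, "aviation")
```

Here `route(routine, adjusters)` takes the automated path, while `route(technical, adjusters)` is assigned to the marine specialist.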

Modular Third-Party Administration Services

The rise of modularity within Third-Party Administration (TPA) services represents a significant shift toward a “claims-as-a-service” model. Rather than forcing a client into a rigid, one-size-fits-all package, modern systems allow for a “pick-and-pay” approach where modules for intake, investigation, or litigation management can be activated independently. This technical flexibility is crucial for corporate entities that choose to self-insure a portion of their risk but require professional infrastructure to manage the actual processing of incidents.

In practice, this modularity allows for rapid scaling during surge events, such as a hurricane or a massive cyber breach. Because the components are decoupled, a firm can integrate its own internal legal team into the system’s litigation module while outsourcing the field adjusting to a network of global experts. This hybridization is what separates current technology from its predecessors; it acknowledges that the modern risk manager needs a toolkit, not a locked box. It turns claims management into a strategic financial lever rather than just an administrative burden.
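One way to picture this decoupling is a pipeline assembled from independently selectable handlers. The sketch below is an assumption about how such a design might look, not a description of any particular TPA platform; the module names and the `build_pipeline` helper are hypothetical.

```python
def intake(claim):
    return {**claim, "intake": "vendor"}


def investigation(claim):
    return {**claim, "investigation": "vendor"}


def litigation(claim):
    return {**claim, "litigation": "vendor"}


# Default vendor-provided modules, keyed by name.
DEFAULT_MODULES = {
    "intake": intake,
    "investigation": investigation,
    "litigation": litigation,
}


def build_pipeline(enabled, overrides=None):
    """Compose only the enabled modules, letting the client swap in its own handlers."""
    handlers = {**DEFAULT_MODULES, **(overrides or {})}
    steps = [handlers[name] for name in enabled]

    def run(claim):
        for step in steps:
            claim = step(claim)
        return claim

    return run


def in_house_litigation(claim):
    # Stand-in for the client's internal legal team handling the matter.
    return {**claim, "litigation": "in_house"}


# Client activates intake and litigation only, with its own litigation handler.
pipeline = build_pipeline(["intake", "litigation"], {"litigation": in_house_litigation})
result = pipeline({"claim_id": "C-1"})
```

Because the investigation module was never activated, `result` carries no investigation entry, and the litigation step reflects the in-house override.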

Current Trends and Technological Shifts

The most impactful trend currently reshaping the industry is the deep integration of Large Language Models (LLMs) and specialized AI to handle the unstructured data that has long plagued insurance. Until recently, adjusters spent countless hours reading through thousands of pages of medical reports or construction contracts. Today’s systems employ cognitive processing to summarize these documents, highlight discrepancies, and even suggest settlement ranges based on decades of historical data. This shift is not about replacing the human adjuster but about augmenting their capability to handle more complex scenarios with greater accuracy.

Moreover, there is a visible move toward “proactive claims management,” where the system uses predictive analytics to warn clients of potential losses before they occur. By analyzing patterns in sensor data or global shipping delays, the technology can suggest preventative measures. This represents a shift in the insurer’s role: no longer just a source of indemnity after a disaster, but a partner in risk prevention. The industry is moving from a reactive “repair and replace” mindset to a proactive “predict and prevent” philosophy.

Real-World Applications and Sector Deployment

Managing Large-Scale Multinational Losses

When a multinational corporation suffers a synchronized loss, such as a cyberattack affecting offices in twenty countries, the GCMS becomes the primary command-and-control center. In these scenarios, the technology must manage multiple currencies, languages, and time zones simultaneously. The ability of the system to aggregate these losses into a single global exposure report allows the CFO to understand the total impact on the balance sheet in real time. This application is particularly critical in sectors like manufacturing or energy, where a single localized failure can have cascading effects across a global supply chain.
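The aggregation step can be sketched in a few lines of Python. The exchange rates below are placeholder values chosen for easy arithmetic, not market data, and the `aggregate_exposure` function is a hypothetical helper; `Decimal` is used so that currency amounts are not subject to binary floating-point rounding.

```python
from decimal import Decimal

# Placeholder conversion rates to the reporting currency (not real market rates).
RATES_TO_USD = {
    "USD": Decimal("1"),
    "EUR": Decimal("1.10"),
    "SGD": Decimal("0.75"),
}


def aggregate_exposure(losses, rates, reporting_currency="USD"):
    """Convert each local loss to the reporting currency and total them.

    `losses` is a list of (country, currency, amount-as-string) tuples.
    """
    total = Decimal("0")
    by_country = {}
    for country, currency, amount in losses:
        converted = Decimal(amount) * rates[currency]
        by_country[country] = by_country.get(country, Decimal("0")) + converted
        total += converted
    return {"currency": reporting_currency, "total": total, "by_country": by_country}


losses = [
    ("DE", "EUR", "1000"),
    ("SG", "SGD", "2000"),
    ("US", "USD", "500"),
]
report = aggregate_exposure(losses, RATES_TO_USD)
```

With these placeholder rates the three local losses convert to 1,100, 1,500, and 500, giving a total exposure of 3,100 in the reporting currency.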

Specialized Technical Adjusting in the London Market

The London Market, specifically the Lloyd’s ecosystem, remains the ultimate testing ground for these systems. Here, the complexity of risks, ranging from space satellites to marine hulls, demands a level of technical adjusting that basic automation cannot provide. The GCMS facilitates this by connecting specialized London brokers with on-the-ground adjusters worldwide, ensuring that the specific wording of a London-market policy is strictly adhered to, regardless of where the damage occurred. This synergy between high-end technical expertise and global digital reach is a major reason the London Market has remained the epicenter of specialty insurance.

Challenges and Adoption Barriers

Despite the obvious benefits, the path to global adoption is fraught with technical and regulatory hurdles. The most significant obstacle is data sovereignty. Many nations have enacted strict laws requiring that data generated within their borders stay within those borders, which complicates the goal of a truly unified global platform. Balancing the need for a “single pane of glass” view with the requirement for decentralized data storage is a complex architectural challenge that requires sophisticated encryption and localized cloud instances.
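A simplified sketch of that balance: full claim records stay in a store for the region where they originated, while a global index holds only a small set of summary fields. Which fields may legally leave a jurisdiction depends on the applicable law, so the choice of `status` and `reserve` below is purely an assumption, as is the `ResidencyAwareStore` class itself. Encryption is omitted for brevity.

```python
class ResidencyAwareStore:
    """Keep full records in-region; expose only summary fields globally."""

    def __init__(self, regions):
        # One in-memory dict per region stands in for a localized cloud instance.
        self.regional = {region: {} for region in regions}
        self.global_index = {}

    def save(self, claim_id, region, record):
        if region not in self.regional:
            raise ValueError(f"no local store configured for region {region!r}")
        # The full record, including personal data, never leaves its region.
        self.regional[region][claim_id] = record
        # Only assumed-safe summary fields are copied to the global view.
        self.global_index[claim_id] = {
            "region": region,
            "status": record["status"],
            "reserve": record["reserve"],
        }

    def global_view(self):
        return dict(self.global_index)


store = ResidencyAwareStore(["EU", "APAC"])
store.save(
    "C-1",
    "EU",
    {"status": "open", "reserve": 25000, "claimant_name": "Example GmbH"},
)
```

The global view gives headquarters the “single pane of glass” for status and reserves, while the claimant's details remain available only through the regional store.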

Furthermore, there is the persistent “talent gap” within the industry. While the technology is advancing rapidly, the pool of professionals who possess both the technical adjusting skills and the digital literacy to operate these systems is shrinking. This creates a bottleneck where the software’s potential is limited by the human capacity to direct it. Ongoing development is focusing on making the user interfaces more intuitive and using AI to provide “on-the-job” guidance to newer adjusters, effectively codifying the knowledge of retiring veterans into the system’s own logic.

Future Outlook and Developmental Trajectory

The trajectory of claims management is clearly moving toward a “touchless” future for low-complexity events, while high-complexity losses will become increasingly data-driven. We are likely to see the integration of digital twins—virtual replicas of physical assets—that allow adjusters to virtually “walk through” a damaged building from thousands of miles away using VR headsets. This will not only reduce the carbon footprint associated with global travel but will also allow for much faster loss assessments in hazardous environments where human entry is restricted.

Long-term, these systems will likely become the backbone of a global resiliency network. By sharing anonymized data on loss patterns, the industry could create a feedback loop that informs safer building codes, better cybersecurity protocols, and more robust supply chain designs. The impact will extend beyond the insurance sector, potentially lowering the overall cost of risk for society and making the global economy more resilient to the inevitable shocks of the future.

Final Assessment and Summary

This evaluation of global claims management systems reveals a technology that has successfully transitioned from a back-office utility to a frontline strategic asset. By integrating modular TPA services with robust operational frameworks, the industry has addressed the critical need for both scale and specialized expertise. The analysis shows that while technical barriers like data sovereignty remain, the move toward AI-augmented decision-making and proactive risk prevention appears irreversible. These systems are redefining the value of insurance by focusing on data-driven intelligence rather than just financial reimbursement.

Ultimately, the most successful implementations are those that treat technology as an enhancer of human judgment rather than a replacement for it. The future success of this sector depends on the ability of firms to bridge the talent gap while continuing to innovate in areas like digital twins and real-time telemetry. By stabilizing the volatile process of loss recovery, these platforms serve as the invisible infrastructure that keeps the global economy functional during times of crisis. The shift toward a more integrated, transparent, and predictive claims environment provides a foundation for the next decade of industrial and commercial growth.