Across factories, warehouses, and clinics, hidden strains quietly sap strength long before a claim is filed, yet the signals are measurable if leaders know where and how to look. Every year, musculoskeletal disorders (MSDs) persist as a stubborn drag on performance, morale, and cost control, even in well-run operations that meet regulatory requirements. The underlying issue is rarely a single dramatic lift or a one-off awkward reach. It is the accumulation of small compromises in posture, force, and repetition embedded in everyday tasks. The result is a pattern of “invisible” exposure that becomes visible only when pain forces time off or mounting claims push safety to the forefront. That is why risk discovery now hinges on making strain tangible, quantifiable, and comparable, then matching the right expertise and interventions to each job with precision. Insurance carriers, led by voices like Dan Campany, Head of Risk Services at The Hartford, have leaned into this change by coupling AI-enabled diagnostics with human-centered design and behavior reinforcement.

Why MSDs Stay Invisible and Costly

The complexity of musculoskeletal exposure stems from ordinary motions that appear innocuous until they accumulate into injury, so risk often hides in the tempo of production rather than in dramatic errors. Back strains emerge from half-turns while holding weight at mid-torso, wrists inflame during rapid fixture swaps, and shoulders pay for overhead reaches that shave seconds from cycle time. These micro-decisions trade biomechanical margin for perceived efficiency, and they frequently go unchallenged because the work still gets done. When discomfort surfaces, it is easy to attribute it to individual variation rather than job design. Meanwhile, the costs climb in multiple directions: direct medical spend, comp payouts, overtime to cover absences, retraining of floaters, and throughput slowdowns as teams adjust to resource gaps.

The human side is equally consequential and usually precedes the statistics: pain dulls attention, erodes confidence in management’s priorities, and feeds disengagement that ripples across crews. Even with solid lagging indicators, leaders often lack a crisp picture of what, exactly, needs to change on the floor. Without a granular view of posture, force, and frequency, debates about fixes drift into generalities (more training, slower pace, better reminders), none of which remove the root cause. The result is a cycle where interventions depend on anecdote and the loudest complaint rather than objective evidence. Breaking that cycle requires a diagnostic method that can expose risks baked into routine tasks, rank them by severity and reach, and translate findings into a shared operational language that safety, operations, and finance accept as credible.

Why Traditional Ergonomics Struggle to Scale

Legacy ergonomic reviews rely on human expertise delivered on-site, which ensures nuance but limits reach, particularly across dispersed networks or high-mix environments. An expert can observe a job, coach a worker, and spot layout quirks no algorithm catches, yet each visit consumes time and budget. For small employers or multi-location operations, this creates an access gap: the places most in need of insight often have the least bandwidth to procure it. Travel and scheduling bottlenecks further narrow the window, so assessments skew toward the jobs already known to be problematic rather than the tasks where latent exposure is highest. Over a year, the net effect is a patchwork of good fixes and blind spots that persist because there is no systematic way to scan broadly and decide where to focus first.

The prioritization challenge compounds the scale problem. Executives ask where the greatest risk sits and why a proposed fix beats cheaper alternatives, and credible answers depend on comparative data. Traditional methods can produce thorough reports, but they are not always designed for rapid, side-by-side triage across dozens of jobs. That slows decisions and suppresses momentum, especially when the case for investment competes with line upgrades or hiring. In practice, teams default to incremental adjustments—move a bin, add a mat, deliver refresher training—because those choices are easier to approve and deploy. Without a fast, repeatable way to show how one task’s strain eclipses another’s, capital flows to the visible or the vocal, not necessarily the most consequential.

How AI Computer Vision Makes Risk Visible

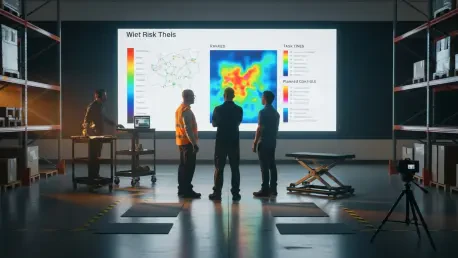

Short videos recorded on ordinary phones have changed the conversation by turning routine work into analyzable motion, down to joint angles and forces on specific body segments. AI-powered computer vision maps a worker’s posture and movement frame by frame, applies biomechanics and physics to estimate loads, and assigns a numerical risk score that aligns with a color-coded visualization of the body. A red band at the lower back during a pallet lift, for example, focuses attention on lumbar strain; a yellow shoulder during overhead kitting points to elevation and reach; a green knee during a crouch signals acceptable range. Because the footage is job-specific, frontline teams recognize their reality in the output, and because the metric is consistent across tasks, leaders can rank opportunities without long deliberation.
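To make the scoring step more concrete, here is a minimal sketch of how a single posture metric could be derived and banded. It assumes a pose estimator has already produced 2D keypoints (x, y in pixels) for the shoulder and hip in each frame, with the camera viewing the worker side-on. It computes trunk flexion from vertical and maps it to a color band. All function names and the 20° and 60° cut-offs are hypothetical and chosen for illustration; commercial tools estimate loads with full 3D biomechanical models, which this does not attempt.

```python
import math
from typing import List, Tuple

Point = Tuple[float, float]  # (x, y) image coordinates; y grows downward


def angle_at(a: Point, b: Point, c: Point) -> float:
    """Angle in degrees at vertex b, between the segments b->a and b->c."""
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    norm = math.hypot(*v1) * math.hypot(*v2)
    if norm == 0:
        raise ValueError("keypoints coincide; angle is undefined")
    cos_theta = (v1[0] * v2[0] + v1[1] * v2[1]) / norm
    # Clamp to [-1, 1] so floating-point noise cannot break acos.
    return math.degrees(math.acos(max(-1.0, min(1.0, cos_theta))))


def trunk_flexion(shoulder: Point, hip: Point) -> float:
    """Lean of the hip->shoulder segment away from vertical, in degrees."""
    straight_up = (hip[0], hip[1] - 1.0)  # a point directly above the hip
    return angle_at(shoulder, hip, straight_up)


# Hypothetical band limits, for illustration only.
BANDS = [(20.0, "green"), (60.0, "yellow")]


def band(angle_deg: float) -> str:
    """Map an angle to a color band: below 20 green, below 60 yellow, else red."""
    for limit, color in BANDS:
        if angle_deg < limit:
            return color
    return "red"


def clip_summary(frames: List[Tuple[Point, Point]]) -> Tuple[float, str, float]:
    """Summarize a clip given one (shoulder, hip) keypoint pair per frame.

    Returns the worst flexion angle, its band, and the share of frames in red.
    """
    angles = [trunk_flexion(shoulder, hip) for shoulder, hip in frames]
    worst = max(angles)
    share_red = sum(band(a) == "red" for a in angles) / len(angles)
    return worst, band(worst), share_red
```

Reporting both the worst frame and the share of frames in red is one reasonable design choice: the peak captures the single most extreme posture, while the share reflects how long the worker stays there.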

This quantification does more than validate concerns—it creates a common language that accelerates decisions. Safety managers can show before-and-after clips to illustrate how a handle relocation cuts wrist deviation, while operations leaders can see how a mechanical assist not only reduces red zones but also smooths cycle variance. The immediate visibility helps defuse the “it depends” dynamic that often bogs down ergonomics discussions. It also opens doors for rapid pilots: record a minute or two of a task, run the analysis, test a low-cost change like adjusting bench height, then re-record to verify the delta. In practice, that rhythm supports iterative improvement without waiting for a full audit cycle, and it lets geographically scattered sites participate because all that is needed at the start is a phone, a stable vantage point, and a short clip.

The Limits of Automation and the Case for a Tiered Model

Technology excels at diagnosis and comparison, but solutions still live in the realm of context, trade-offs, and human factors, where seasoned ergonomists thrive. An algorithm can flag excessive trunk flexion; it cannot, on its own, redesign a line to preserve takt time while clearing a conveyor and balancing reach zones. The intervention might be as light as a platform and a part bin flip, or as involved as a mechanical lift, end-effector redesign, and revised standard work. Each choice carries capital cost, maintenance implications, training needs, and cultural adoption challenges. That is why The Hartford has treated the AI output as a catalyst and kept expert judgment at the center, with ergonomists translating risk patterns into fit-for-purpose changes that work in real plants, clinics, and distribution centers.

To extend reach without diluting quality, a tiered service model aligns resources to the complexity and severity of the job. At the entry level, self-service diagnostics invite insured employers to capture and submit videos, receive risk scores and visualizations, and hold a structured conversation about what to try first. For moderate complexity, trained generalist risk consultants handle common scenarios—bench setup, material presentation, push–pull dynamics—while tapping ergonomists for coaching and review. High-severity or intricate operations trigger direct involvement from specialist ergonomists who conduct deeper studies, coordinate with engineering on tooling or layout, and guide change management. By matching path to need, the model lowers barriers for small employers and preserves scarce specialist time for the cases where it moves the needle most.
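The tiered routing can be pictured as a small decision rule. The sketch below is illustrative only: the 0 to 10 risk scale, the `intricate` flag, and the `MODERATE` and `HIGH` thresholds are hypothetical stand-ins, not The Hartford's actual criteria.

```python
from dataclasses import dataclass
from enum import Enum


class Tier(Enum):
    SELF_SERVICE = "self-service diagnostics"
    GENERALIST = "generalist consultant with ergonomist review"
    SPECIALIST = "specialist ergonomist"


@dataclass
class Job:
    name: str
    risk_score: float  # hypothetical 0-10 score from the video analysis
    intricate: bool    # e.g. needs tooling, layout, or engineering changes


# Hypothetical escalation thresholds; a real program would calibrate its own.
MODERATE = 4.0
HIGH = 7.0


def route(job: Job) -> Tier:
    """Send each job to the lightest tier that fits its severity and complexity."""
    if job.risk_score >= HIGH or job.intricate:
        return Tier.SPECIALIST
    if job.risk_score >= MODERATE:
        return Tier.GENERALIST
    return Tier.SELF_SERVICE
```

Checking the specialist condition first means either trigger (a high score or an intricate redesign) is enough to escalate, so scarce specialist time goes to the cases that need it while simpler jobs never wait in that queue.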

From Diagnosis to Action: A Practical Workflow

A repeatable loop turns insight into measurable impact: diagnose, prioritize, implement, re-measure, and iterate. Diagnosis starts with targeted video capture of representative cycles, ideally across shifts and operators to reduce bias. The resulting scores and heatmaps point to hotspots—excessive lumbar flexion during tote transfers, for instance—that can be compared across roles and sites. Prioritization then weighs exposure severity against reach and feasibility: a red-rated task performed by many workers every shift outranks a yellow-rated job done occasionally by a small team. This stage benefits from a cross-functional huddle, so safety, operations, and maintenance align on what can be piloted quickly versus what demands engineering support or capital approval.
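The prioritization rule (severity weighed against reach, with feasibility as a tiebreaker) can be sketched as a simple scoring function. The severity weights, the use of workers times shifts per week as a proxy for reach, and the `quick_fix` flag are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical weights: a red band counts far more than yellow or green.
SEVERITY_WEIGHT = {"red": 9, "yellow": 3, "green": 1}


@dataclass
class Task:
    name: str
    band: str             # worst body-region band from the video analysis
    workers: int          # number of people who perform the task
    shifts_per_week: int  # how many shifts per week include the task
    quick_fix: bool       # can be piloted without engineering or capital approval


def exposure(task: Task) -> int:
    """Severity weighted by reach: how bad, times how many people, how often."""
    return SEVERITY_WEIGHT[task.band] * task.workers * task.shifts_per_week


def ranked(tasks: List[Task]) -> List[Task]:
    """Highest exposure first; on ties, tasks that can be piloted quickly come first."""
    return sorted(tasks, key=lambda t: (-exposure(t), not t.quick_fix))
```

With these weights, a red-rated task done by a dozen workers every shift lands far above a yellow-rated task done occasionally by two people, which matches the ordering described above. The cross-functional huddle would still decide what the numbers cannot, such as whether a capital request fits this quarter.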

Implementation should mirror the complexity of the hazard. Low-friction adjustments might include repositioning materials to the power zone, installing turntables to reduce twisting, or adding casters to enable push over carry. Engineering controls scale up from height-adjustable benches and vacuum lifts to line rebalancing or automated assists. After changes, re-measurement verifies both ergonomic gain and operational effect: a lower risk score, a tighter cycle time distribution, fewer quality escapes linked to fatigue. That evidence fuels the iterate step, where the team moves to the next-ranked task, armed with a local case study and a growing playbook. Over time, this cadence converts ergonomics from a reactive fix into an operational competency with objective checkpoints rather than one-off audits.
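The re-measure step checks two things at once: less strain and steadier cycles. A minimal sketch of that comparison follows, assuming per-clip risk scores on some lower-is-better scale and cycle times in seconds for the same task before and after the change. The function name and the rule that both measures must hold or improve are hypothetical choices.

```python
from statistics import mean, stdev
from typing import Dict, Sequence


def verify_change(
    scores_before: Sequence[float],
    scores_after: Sequence[float],
    cycles_before: Sequence[float],
    cycles_after: Sequence[float],
) -> Dict[str, object]:
    """Compare post-change clips against the baseline for the same task.

    scores_*: per-clip risk scores (hypothetical scale, lower is better)
    cycles_*: cycle times in seconds (at least two per list, for stdev)
    """
    risk_before, risk_after = mean(scores_before), mean(scores_after)
    sd_before, sd_after = stdev(cycles_before), stdev(cycles_after)
    return {
        "risk_delta": risk_after - risk_before,  # negative means less strain
        "cycle_sd_before": sd_before,
        "cycle_sd_after": sd_after,              # smaller means steadier cycles
        "improved": risk_after < risk_before and sd_after <= sd_before,
    }
```

Using the standard deviation of cycle time as the "tighter distribution" measure is one simple option; a team could just as well track the interquartile range or the count of cycles beyond a target.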

Proof in Practice: Safety Gains With Operational Upside

Consider a manufacturing line where roughly half of annual injury claims traced back to repetitive handling at a subassembly station, and the day-to-day signal was a cluster of reported soreness and sporadic lost time. Video analysis across three shifts showed consistent red zones at the lower back during bin-to-fixture transfers and yellow shoulders during overhead reach to ancillary components. The ergonomist-guided recommendation combined a mechanical lift for heavier parts, a 90-degree part presentation turn, and a handle relocation on a frequently gripped fixture. The capital outlay was modest relative to a full automation package, installation fit a planned downtime window, and change management focused on brief training and standard work updates.

Post-implementation clips revealed a decisive shift: lumbar red bands fell into the yellow–green range, shoulder elevation dropped within acceptable angles, and cycle time variability narrowed as strain-induced hesitations disappeared. Over the subsequent quarters, the site reported fewer injury reports tied to the station, reduced lost time, and steadier staffing without last-minute reassignments. Just as significant, throughput improved because the lift and layout reduced rework on misaligned components, and supervisors spent less time juggling coverage. The business case, once made around safety, gained reinforcement from quality and productivity wins, creating internal support for the next round of changes on adjacent tasks. The lesson was clear: engineering controls that remove a root cause often pay for themselves through both avoided injuries and smoother operations.

When Behavior Matters: Training, Monitoring, and Nudges

Engineering cannot eliminate every exposure, especially in dynamic environments like last-mile delivery, patient handling, or service work where posture and pace vary by context. Residual risk then becomes a behavior question: how to sustain safer technique under real pressures. Effective programs blend targeted coaching, supervisor cues, and simple environmental prompts that make the desired action the easy action. For example, a delivery team might adopt a “one hand checks, two hands lift” rule backed by staging heights and grip aids, while supervisors use brief pre-shift huddles to reinforce posture cues tied to that day’s route demands. Video diagnostics help here as well, anchoring coaching in concrete visuals rather than abstract reminders.

Behavioral economics adds staying power through feedback and incentives calibrated to avoid perverse effects. Telematics-inspired dashboards can reflect adherence to key behaviors, such as using a cart instead of carrying or maintaining neutral wrist angles at a bench. Nudges, like placing tools at the default height or color-coding zones for reach, reduce cognitive load, while recognition programs spotlight teams that sustain improvements over months, not days. Importantly, monitoring should be framed as support, not surveillance, with a clear link to comfort and performance. Without ongoing reinforcement, drift returns and risk creeps back. With it, organizations preserve the gains from engineering changes and prevent backsliding as new hires come on board or production volumes ramp up.
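One way such a dashboard might turn observations into a drift signal is sketched below. It assumes supervisors log, per team and week, how many observed opportunities used the target behavior (say, cart instead of carry). The four-week windows and the 10-point margin are hypothetical defaults, not recommended values.

```python
from statistics import mean
from typing import Sequence


def adherence(observed_safe: int, opportunities: int) -> float:
    """Share of observed opportunities where the target behavior was used."""
    return observed_safe / opportunities if opportunities else 0.0


def drifting(
    weekly_rates: Sequence[float],
    baseline_weeks: int = 4,
    recent_weeks: int = 4,
    margin: float = 0.10,
) -> bool:
    """Flag drift when recent adherence falls more than `margin` below the baseline.

    weekly_rates: weekly adherence fractions for one team, oldest first.
    """
    if len(weekly_rates) < baseline_weeks + recent_weeks:
        return False  # not enough history to compare yet
    baseline = mean(weekly_rates[:baseline_weeks])
    recent = mean(weekly_rates[-recent_weeks:])
    return baseline - recent > margin
```

Aggregating by team and week rather than by individual arguably keeps the metric closer to the support-not-surveillance framing, and comparing each team with its own baseline avoids ranking crews whose routes or tasks differ.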

Implications for Employers and the Insurer’s Evolving Role

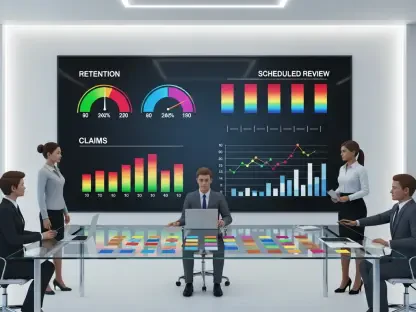

For employers deciding where to begin, the most practical step is to select one high-impact job and run the full loop on a tight timeline: capture short videos, secure a baseline score, pilot a right-sized control, and re-measure to verify the win. That early proof point then supports a tiered playbook that clarifies decision paths: self-service diagnostics for low-stakes tasks, generalist-led improvements with ergonomic oversight for moderate jobs, and direct specialist engagement for complex or high-severity exposures. Investment choices should be guided by both safety and performance, with leaders explicitly counting reduced claims, steadier staffing, tighter cycle times, and fewer quality escapes. Insurers, exemplified by The Hartford’s prevention-forward stance, function as partners in this process, aligning risk services with measurable outcomes and reserving expert resources where they matter most.

Looking ahead, the actionable priorities are straightforward. Build a measurement culture that pairs risk scores with operational KPIs, so each intervention generates a clear before-and-after story. Standardize video capture and review as part of new job design and post-change verification, not just after an injury. Codify thresholds that trigger escalation to ergonomists, ensuring complex cases receive depth without delaying simpler fixes. Layer behavior reinforcement on top of engineering changes from day one, using nudges, cues, and recognition to protect gains. Finally, treat ergonomics as an operational lever: integrate it into capital planning, maintenance schedules, and talent onboarding. Done this way, MSD prevention shifts from a cost center to a performance asset, and AI diagnostics plus human expertise become the practical bridge that turns hidden strain into sustained improvement.